Organizations are increasingly turning to knowledge-based AI applications to unlock the value of their internal data. From legal research and customer support to pharmaceutical discovery and enterprise search, the need for accurate, context-aware responses has elevated demand for Retrieval-Augmented Generation (RAG) platforms. Among these, frameworks like Haystack stand out for enabling teams to build AI systems that combine large language models (LLMs) with structured and unstructured knowledge sources in a controlled, scalable way.

TLDR: Retrieval-Augmented Generation (RAG) platforms such as Haystack allow organizations to build AI applications that ground large language model outputs in trusted data sources. By combining document retrieval with generative models, these tools improve accuracy, transparency, and relevance. They are particularly valuable for enterprises managing large volumes of proprietary information. With modular architecture and integration capabilities, Haystack and similar platforms make knowledge-based AI both practical and scalable.

At its core, Retrieval-Augmented Generation addresses a fundamental limitation of standalone language models: they generate responses based on learned patterns, not real-time knowledge retrieval. This can lead to outdated information, hallucinations, or vague answers. RAG platforms introduce an additional retrieval layer that searches a defined knowledge base before generation, grounding outputs in verifiable data.

Understanding Retrieval-Augmented Generation

RAG operates in two primary stages:

- Retrieval: The system searches databases, document repositories, or vector stores to find relevant information.

- Generation: A language model uses the retrieved context to produce a coherent, context-aware response.

This approach keeps responses aligned with authoritative documents rather than relying solely on pretrained knowledge, provided the retriever surfaces the right passages.
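The two stages can be sketched in a few lines of Python. This is a minimal illustration, not Haystack code: the `KNOWLEDGE_BASE` contents, the word-overlap scoring, and the `llm` callable are all assumptions made for the example, and a real deployment would use a document store and an actual model client.

```python
from typing import Callable

# Hypothetical in-memory knowledge base; a real system would query a document store.
KNOWLEDGE_BASE = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Support is available Monday to Friday, 9:00 to 17:00 CET.",
    "Enterprise plans include single sign-on and audit logging.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Stage 1: rank documents by word overlap with the query (a toy scorer)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query: str, context: list[str]) -> str:
    """Place the retrieved passages in front of the question."""
    sources = "\n".join(f"- {passage}" for passage in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


def answer(query: str, llm: Callable[[str], str]) -> str:
    """Stage 2: hand the grounded prompt to whatever model client is supplied."""
    context = retrieve(query, KNOWLEDGE_BASE)
    return llm(build_prompt(query, context))
```

Passing `llm=lambda prompt: prompt` returns the assembled prompt unchanged, which is a convenient way to inspect what the model would receive before wiring in a real provider.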

For enterprises, this architecture offers significant advantages:

- Improved Accuracy: Responses are grounded in company-approved documents.

- Reduced Hallucination Risk: Referencing real sources reduces speculative answers.

- Transparency: Many systems can provide citations or source excerpts.

- Up-to-Date Knowledge: Answers reflect the current state of the knowledge base once updated documents are re-indexed, without retraining the model.

Haystack as a Leading RAG Framework

Haystack is an open-source framework designed to help developers create production-ready question answering, semantic search, and conversational AI systems. Its modular design allows teams to plug in different retrievers, document stores, and generation models based on performance and scalability requirements.

What differentiates Haystack is its emphasis on flexibility and enterprise readiness:

- Support for various vector databases and document stores.

- Integration with multiple LLM providers.

- Pipeline-based architecture for customizable workflows.

- Deployment options ranging from local installations to cloud infrastructure.

Rather than imposing a rigid structure, Haystack allows architects to assemble components tailored to their domain, whether legal contracts, medical literature, financial reports, or internal HR documentation.
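The idea of swappable components can be illustrated with a small, framework-independent sketch. The class names below (`Retriever`, `Generator`, `KeywordRetriever`, `TemplateGenerator`, `RagPipeline`) are hypothetical and are not Haystack's API; they only show how a pipeline can depend on interfaces so that one retriever or generator can be exchanged for another.

```python
from dataclasses import dataclass
from typing import Protocol


class Retriever(Protocol):
    def retrieve(self, query: str) -> list[str]: ...


class Generator(Protocol):
    def generate(self, query: str, context: list[str]) -> str: ...


class KeywordRetriever:
    """Returns documents that share at least one word with the query."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = documents

    def retrieve(self, query: str) -> list[str]:
        terms = set(query.lower().split())
        return [d for d in self.documents if terms & set(d.lower().split())]


class TemplateGenerator:
    """Stand-in for an LLM call: formats the context instead of generating text."""

    def generate(self, query: str, context: list[str]) -> str:
        if not context:
            return "No supporting documents found."
        return f"Q: {query} | Sources: " + " | ".join(context)


@dataclass
class RagPipeline:
    """Wires any retriever to any generator that satisfies the protocols above."""

    retriever: Retriever
    generator: Generator

    def run(self, query: str) -> str:
        return self.generator.generate(query, self.retriever.retrieve(query))


pipeline = RagPipeline(
    retriever=KeywordRetriever(["contracts renew annually", "invoices are due in 30 days"]),
    generator=TemplateGenerator(),
)
```

Replacing `KeywordRetriever` with a vector-based retriever, or `TemplateGenerator` with a wrapper around a hosted model, would leave `RagPipeline` untouched, which is the property the modular design is meant to provide.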

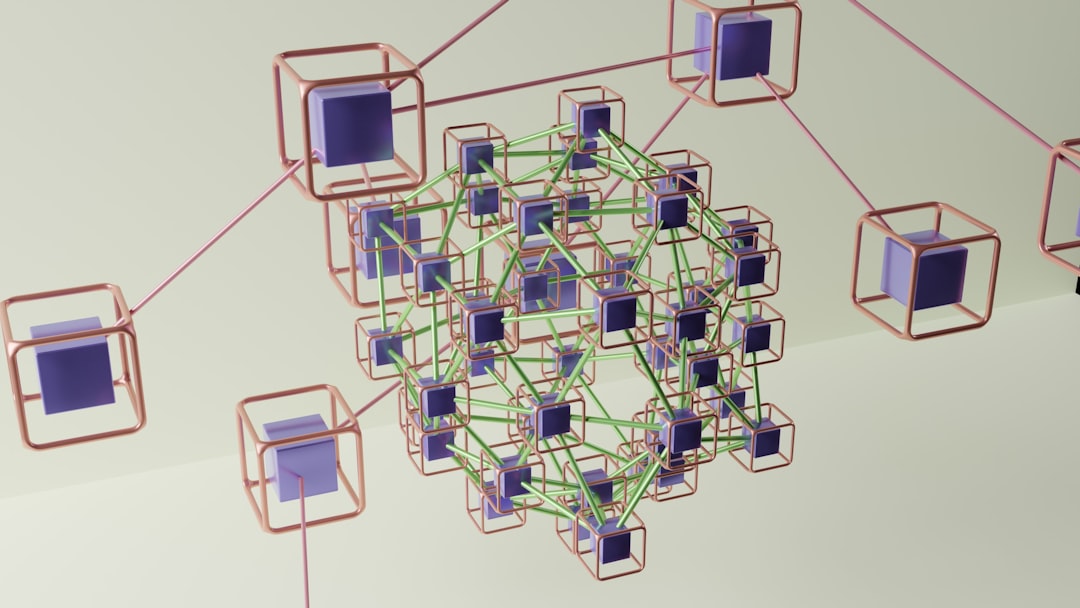

Core Components of Knowledge-Based AI Apps

Building a robust RAG application involves several interconnected components:

- Document Ingestion: Data must be parsed, cleaned, and indexed.

- Embedding and Indexing: Text is transformed into vector representations for semantic search.

- Retriever: Responsible for identifying the most relevant documents.

- Reader or Generator: Produces answers based on retrieved context.

- Evaluation and Monitoring: Ensures quality, performance, and compliance over time.

Haystack’s pipeline architecture makes these stages explicit and configurable. Developers can adjust ranking thresholds, reranking strategies, and prompt templates to optimize accuracy.
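To make the ingestion, embedding, and retrieval stages concrete, here is a minimal sketch under simplifying assumptions: word-count vectors stand in for learned embeddings, the index is a plain list, and `max_words`, `top_k`, and `min_score` are illustrative parameter names rather than settings from any particular framework.

```python
import math
from collections import Counter


def chunk(text: str, max_words: int = 12) -> list[str]:
    """Ingestion: split a document into fixed-size word windows."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Embedding stand-in: a bag-of-words count vector, not a learned embedding."""
    return Counter(word.strip(".,;:?!").lower() for word in text.split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(raw_documents: list[str]) -> list[tuple[str, Counter]]:
    """Indexing: store every chunk next to its vector."""
    return [(piece, embed(piece)) for doc in raw_documents for piece in chunk(doc)]


def search(query: str, index: list[tuple[str, Counter]],
           top_k: int = 3, min_score: float = 0.2) -> list[tuple[str, float]]:
    """Retriever: score every chunk, drop those below the threshold, keep the best."""
    query_vector = embed(query)
    scored = [(text, cosine(query_vector, vector)) for text, vector in index]
    kept = [pair for pair in scored if pair[1] >= min_score]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)[:top_k]
```

Raising `min_score` trades recall for precision: fewer chunks reach the generator, but those that do are closer matches. A reranking step, if used, would sit between `search` and prompt construction.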

Enterprise Use Cases

RAG platforms are particularly valuable in environments where precision and traceability matter. Common applications include:

- Customer Support Automation: Responding with answers grounded in policy documents and knowledge bases.

- Internal Knowledge Search: Assisting employees in navigating complex documentation.

- Regulatory and Compliance Assistance: Providing referenced explanations from legal texts.

- Technical Documentation Querying: Enabling engineers to quickly find detailed specifications.

In each case, the combination of retrieval and generation provides not only fluent responses but also organizational control over information sources.

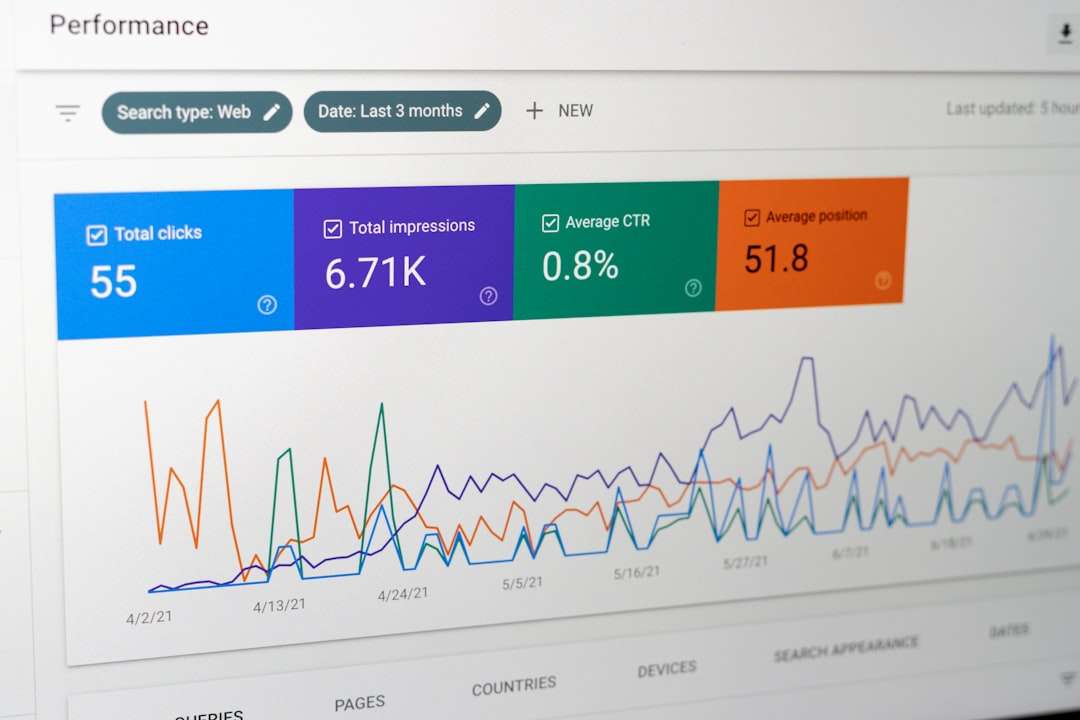

Comparison of Leading RAG Platforms

While Haystack is a prominent framework, it operates within a broader ecosystem. Below is a comparison of several well-known RAG-enabling tools:

| Platform | Architecture Style | Customization Level | Enterprise Readiness | Primary Strength |
|---|---|---|---|---|
| Haystack | Modular pipeline | High | Strong | Flexibility and production focus |
| LangChain | Chain-based orchestration | High | Moderate | Rapid prototyping and integrations |
| LlamaIndex | Index-centric framework | Moderate to High | Growing | Data connectors and indexing tools |
| Managed Cloud RAG Services | Prebuilt workflows | Lower | Very Strong | Simplified deployment and scaling |

While frameworks like LangChain emphasize experimentation and orchestration, Haystack’s explicit pipeline model often appeals to teams that prioritize maintainability and predictable deployment.

Governance, Security, and Compliance

For enterprises, governance is as important as performance. Knowledge-based AI must align with data security standards and regulatory frameworks. RAG platforms contribute to compliance in several ways:

- Controlled Knowledge Sources: Only approved repositories are indexed.

- Access Control Integration: User permissions can restrict document visibility.

- Auditability: Document citations allow traceability.

- Localized Deployment: Options for on-premises hosting protect sensitive data.

This level of oversight is particularly important in industries such as finance, healthcare, and government, where inaccuracies or data exposure carry significant risk.
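Access control and auditability can be combined at retrieval time. The sketch below assumes each document carries a hypothetical `allowed_groups` field and a `source` path; the corpus entries and group names are invented for illustration. Filtering happens before matching, so restricted text never reaches the prompt, and the `source` field gives each answer a traceable citation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str                      # path or ID used for citations and audits
    text: str
    allowed_groups: frozenset[str]   # hypothetical permission metadata


def visible_to(user_groups: set[str], documents: list[Document]) -> list[Document]:
    """Keep only documents the user is permitted to see."""
    return [doc for doc in documents if doc.allowed_groups & user_groups]


def retrieve_for_user(query: str, user_groups: set[str],
                      documents: list[Document]) -> list[Document]:
    """Apply the permission filter first, then a toy keyword match."""
    terms = set(query.lower().split())
    candidates = visible_to(user_groups, documents)
    return [doc for doc in candidates if terms & set(doc.text.lower().split())]


# Invented example corpus.
CORPUS = [
    Document("hr/salary-bands.md", "salary bands for 2024 by level", frozenset({"hr"})),
    Document("handbook/expenses.md", "expenses and salary advance policy",
             frozenset({"hr", "staff"})),
]
```

With this corpus, a user in the `staff` group asking about "salary policy" would only ever see `handbook/expenses.md`, while a user in `hr` would see both documents.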

Implementation Best Practices

Deploying a RAG system requires more than technical assembly. Organizations should adopt several best practices:

- Define Clear Knowledge Boundaries: Identify authoritative sources before indexing.

- Test Retrieval Quality: Check whether relevant documents actually appear in the top results, and tune semantic similarity thresholds accordingly.

- Measure Answer Grounding: Verify that outputs are supported by citations.

- Monitor Performance Continuously: Update embeddings and retrain ranking mechanisms as needed.

- Iterate Prompt Engineering: Refine prompt templates for clarity and alignment.

Continuous evaluation ensures that the system remains reliable as document corpora expand or organizational policies evolve.
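Two of these checks lend themselves to simple metrics. The sketch below shows recall at k for retrieval quality and a deliberately crude grounding heuristic; the function names and the `min_overlap` threshold are assumptions for illustration, and the sentence splitting is naive (it would break on decimals and abbreviations). Production evaluation usually relies on labeled test sets and stronger entailment or citation checks.

```python
import re


def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the known-relevant documents that appear in the top k results."""
    if not relevant_ids:
        return 0.0
    hits = set(retrieved_ids[:k]) & relevant_ids
    return len(hits) / len(relevant_ids)


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_ratio(answer: str, sources: list[str], min_overlap: float = 0.6) -> float:
    """Share of answer sentences whose words mostly appear in a single source.

    A rough lexical proxy for grounding, not a semantic check.
    """
    sentences = [s.strip() for s in re.split(r"[.!?]+", answer) if s.strip()]
    if not sentences:
        return 0.0
    source_tokens = [_tokens(source) for source in sources]
    supported = 0
    for sentence in sentences:
        words = _tokens(sentence)
        if not words:
            continue
        best = max((len(words & st) / len(words) for st in source_tokens), default=0.0)
        if best >= min_overlap:
            supported += 1
    return supported / len(sentences)
```

Tracking both numbers over time on a fixed set of test questions gives an early signal when new documents, changed embeddings, or revised prompts degrade retrieval or grounding.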

The Strategic Value of Knowledge-Based AI

RAG platforms signal a shift from generic AI to domain-aware intelligence. Instead of relying on static pretrained knowledge, enterprises can build AI systems deeply embedded in their own operational context.

This capability offers long-term advantages:

- Enhanced employee productivity.

- Faster onboarding and training support.

- Reduced operational friction.

- Improved customer trust through accurate answers.

Frameworks like Haystack provide the infrastructure necessary to operationalize this vision. By combining retrieval precision with generative fluency, they transform static document repositories into dynamic, conversational knowledge systems.

Conclusion

Retrieval-Augmented Generation platforms have become foundational to modern knowledge-based AI development. Haystack, with its modular architecture and production-oriented design, exemplifies how organizations can construct reliable, scalable AI systems grounded in trusted information.

As AI adoption deepens across industries, the emphasis will increasingly shift from generative novelty to accuracy, governance, and contextual relevance, the qualities RAG platforms are designed to deliver. For enterprises seeking to build trustworthy AI applications, they represent not merely a technical enhancement but a strategic investment in long-term intelligence infrastructure.