As large language models (LLMs) become deeply integrated into customer support, coding assistants, enterprise search, and workflow automation, prompt injection attacks have emerged as one of the most serious security threats. A single malicious string hidden inside user input, web content, or documents can manipulate model behavior, override system instructions, or exfiltrate sensitive data. To mitigate these risks, organizations are increasingly adopting LLM guardrails tools that monitor, filter, and secure model interactions.

TLDR: Prompt injection is a growing security risk that can manipulate LLM outputs and expose sensitive information. Guardrails tools like Rebuff, Lakera Guard, Guardrails AI, Azure AI Content Safety, and Protect AI help detect and prevent malicious prompts. These tools use policy enforcement, input scanning, output validation, and real-time monitoring to protect AI applications. Implementing a layered defense strategy significantly reduces LLM vulnerability.

Below are five LLM guardrails tools similar to Rebuff that help prevent prompt injection and ensure safer AI deployments.

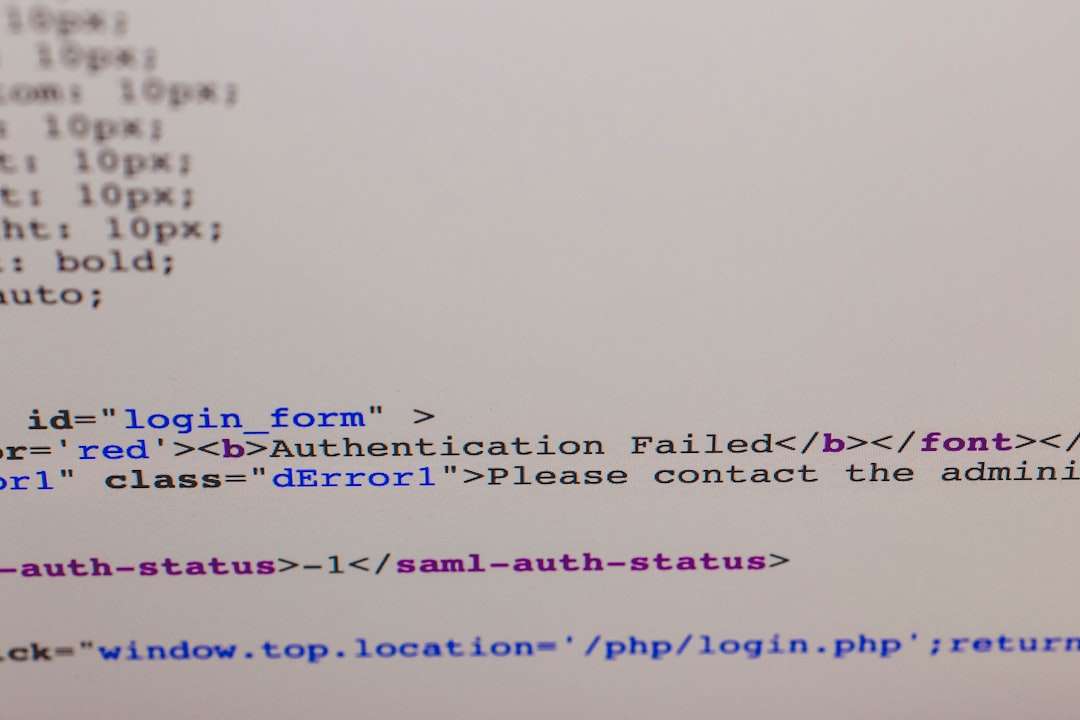

Why Prompt Injection Is So Dangerous

Prompt injection occurs when malicious instructions are embedded into user inputs or external content to manipulate an LLM’s response. Because language models prioritize natural language instructions, they can be tricked into:

- Revealing system prompts or internal configurations

- Exposing confidential business data

- Executing unintended actions in connected systems

- Bypassing content moderation restrictions

Unlike traditional software vulnerabilities, prompt injection targets the reasoning layer of AI systems. This makes it a unique challenge requiring specialized mitigation tools.

1. Rebuff

Rebuff is purpose-built to defend against prompt injection attacks. Designed specifically for LLM applications, it adds a detection and filtering layer between users and the model.

Key Features:

- Prompt injection detection engine

- Heuristic and ML-based filtering

- Configurable security rules

- Input and output validation

Rebuff works particularly well for applications where LLMs interact with external content sources, such as web browsing agents or document retrieval systems. It flags suspicious instructions like attempts to override system prompts or retrieve hidden data.

Best suited for: Startups and enterprises building LLM-powered chatbots, AI agents, and document analysis tools.

2. Lakera Guard

Lakera Guard focuses on real-time detection of prompt injection and data leakage attempts. Its specialization lies in identifying adversarial inputs before they reach the LLM.

Key Features:

- Real-time injection detection

- Adaptive policy enforcement

- Sensitive data exposure monitoring

- API-based integration

Lakera Guard uses advanced classification models to detect patterns indicative of prompt manipulation. It evaluates both user queries and retrieved external documents, making it highly effective in retrieval-augmented generation (RAG) systems.

Best suited for: Enterprise AI teams deploying complex AI workflows that combine internal data, user input, and third-party content.

3. Guardrails AI

Guardrails AI offers a flexible framework for validating LLM inputs and outputs against predefined schemas and policies. While broader than prompt injection protection, it plays a critical role in securing AI pipelines.

Key Features:

- Input/output validation schemas

- Custom rule definitions

- Output formatting controls

- Policy-based enforcement

Developers can define strict constraints on what the model is allowed to produce. For example, if an LLM is expected to return structured JSON data, Guardrails AI ensures compliance—preventing malicious manipulations from altering output formats or embedding hidden instructions.

Best suited for: Developers who need structured output enforcement alongside injection mitigation.

4. Azure AI Content Safety

Azure AI Content Safety provides content filtering, threat detection, and risk scoring for AI applications. Although not solely focused on prompt injection, its moderation and classification capabilities contribute to injection defense.

Key Features:

- Content categorization and risk scoring

- Customizable policy thresholds

- Multilingual coverage

- Enterprise-grade scalability

By scanning inputs before they reach the LLM, Azure AI Content Safety helps identify malicious or manipulative content patterns. Organizations already using Azure infrastructure benefit from seamless integration.

Best suited for: Enterprises leveraging the Microsoft ecosystem for AI deployment.

5. Protect AI

Protect AI takes a broader ML security approach, covering not only prompt injection but also model supply chain vulnerabilities and runtime risks.

Key Features:

- AI threat detection lifecycle management

- Model integrity monitoring

- Runtime anomaly detection

- Compliance and governance tooling

Protect AI emphasizes operational security and governance. Its monitoring capabilities detect abnormal prompt behavior and suspicious output patterns that may signal injection attempts.

Best suited for: Regulated industries such as finance, healthcare, and government sectors.

Comparison Chart

| Tool | Primary Focus | Real-Time Detection | Policy Customization | Best For |

|---|---|---|---|---|

| Rebuff | Prompt injection detection | Yes | Moderate | LLM apps, AI agents |

| Lakera Guard | Injection and data leakage protection | Yes | High | Enterprise RAG systems |

| Guardrails AI | Schema and output validation | Partial | High | Developers needing structured outputs |

| Azure AI Content Safety | Content moderation | Yes | High | Azure enterprises |

| Protect AI | Full AI security lifecycle | Yes | High | Regulated industries |

Best Practices for Preventing Prompt Injection

While guardrails tools are powerful, they are most effective when combined with secure AI engineering practices.

- Separate system and user prompts strictly

- Sanitize and preprocess external content

- Limit model access to sensitive data

- Implement role-based access controls

- Continuously monitor model outputs

A layered defense strategy—combining input validation, output filtering, runtime monitoring, and human oversight—offers the strongest protection.

The Future of LLM Guardrails

As LLMs gain more autonomy through AI agents and tool-calling capabilities, the attack surface expands dramatically. Guardrails will increasingly incorporate:

- Behavioral anomaly detection

- Agent action sandboxing

- Automated red-teaming

- Adaptive adversarial defenses

Organizations deploying AI at scale should treat prompt injection defense as a core component of AI architecture—not an afterthought. The tools discussed above represent some of the most effective solutions currently available.

Frequently Asked Questions (FAQ)

1. What is prompt injection in LLMs?

Prompt injection is a security attack where malicious instructions are inserted into user inputs or external content to manipulate an LLM’s behavior, override its rules, or extract sensitive data.

2. Why are traditional security tools not enough?

Traditional security tools focus on network or code-level vulnerabilities. Prompt injection targets the language reasoning layer, requiring specialized detection systems designed for AI workflows.

3. Are LLM guardrails tools difficult to integrate?

Most modern guardrails tools provide APIs and SDKs for straightforward integration into AI pipelines. Complexity varies depending on system architecture and customization needs.

4. Can guardrails completely eliminate prompt injection?

No tool can guarantee 100% protection. However, combining guardrails with secure design practices significantly reduces risk and improves resilience.

5. Which tool is best for small businesses?

Smaller teams often prefer focused tools like Rebuff or Guardrails AI due to simpler deployment and targeted feature sets. Enterprises may benefit from comprehensive platforms like Lakera Guard or Protect AI.

6. Is prompt injection only a concern for public chatbots?

No. Any LLM connected to internal databases, APIs, or automated systems can be exploited through injection attacks, making prevention essential for both public and private deployments.